Reading the Solana Tape: Real-World Tips for Tracking SOL Transactions and On-Chain Signals

February 26, 2025 6:08 pm

Whoa! That felt like the right way to start. I was staring at a block history the other night and it hit me: Solana moves fast, like highway fast. Really? Yeah, really. My instinct said something was off about typical explorer views; they showed numbers, but not the story behind them.

Okay, so check this out—I’ve used a handful of explorers while building dashboards and troubleshooting wallet issues. Initially I thought all explorers were roughly the same, but then I realized their data slices and UX choices change how you interpret activity. Actually, wait—let me rephrase that: the same raw data can suggest very different narratives depending on how it’s presented. On one hand, a transaction list looks boring and clinical; on the other hand, with the right context, that same list can reveal stress points, bot patterns, or legitimate liquidity moves.

Here’s the thing. Solana’s high throughput makes the logs dense. Hmm… the density can hide signals. Short bursts of volume appear and vanish. You need to zoom out and zoom in. Sometimes the immediate pattern tricks you into thinking something is trending when it’s just a single bot sweeping a pool.

My first real aha moment came when I chased a failed swap that ate fees across several accounts. I thought it was user error. Then I saw the ledger and realized a program upgrade had subtly changed the program’s instruction signatures, and a bunch of third-party bots misfired. That taught me to always validate program IDs and not rely purely on token tickers. I’m biased, but that part bugs me; watching fees get wasted on failed transactions is painful.

Practical techniques I use when reading Solana transaction flows

Short checks first. Look at slots (or block heights) and timestamps. Then scan for program IDs and repeated signer keys. This sequence filters noise. Seriously? Yes, because many alerts are noise. On the technical side, program IDs tell you whether the activity is from a DEX, a staking program, an NFT Candy Machine, or some custom program; that’s a huge clue.
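Here’s a minimal sketch of that program ID check. The three IDs are the System, SPL Token, and Associated Token Account programs as I know them; the registry itself, the labels, and the `label_programs` helper are my own illustrative names, so verify the IDs against official docs and extend the table with the DEX and staking programs you actually watch.

```python
# Small registry of well-known program IDs. Verify these against official
# Solana/SPL documentation before relying on them; the labels are illustrative.
KNOWN_PROGRAMS = {
    "1" * 32: "System Program",
    "TokenkegQfeZyiNwAJbNbGKPFXCWuBvf9Ss623VQ5DA": "SPL Token",
    "ATokenGPvbdGVxr1b2hvZbsiqW5xWH25efTNsLJA8knL": "Associated Token Account",
}


def label_programs(program_ids):
    """Map each program ID to a label, flagging anything not in the registry."""
    return {
        pid: KNOWN_PROGRAMS.get(pid, "UNKNOWN (investigate)")
        for pid in program_ids
    }
```

Anything that comes back as unknown isn’t necessarily bad; it’s just the part of the transaction that deserves a second look.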

Start with a transaction detail. Ask: who signed it, what programs executed, how much SOL moved, and were any token accounts created? Medium-sized changes can suggest user-driven activity. Large, rapid on-chain swaps generally signal liquidity shifts or arbitrage. Long thoughts here: cross-checking that activity with recent GitHub commits, upgrade proposals, or Twitter threads (if you trust social sources) can confirm whether a change is organic or the result of an upgrade or exploit attempt.
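Those four questions map fairly directly onto the JSON you get back from the `getTransaction` RPC method with `jsonParsed` encoding. The sketch below assumes that response shape (`transaction.message.accountKeys`, `meta.preBalances`, `meta.innerInstructions`, and so on); the function name and the set of parsed instruction types treated as "token account created" are my assumptions, not something an explorer defines for you.

```python
# Parsed SPL Token instruction types assumed to mean "a token account was
# initialized". Associated-token creates normally show up here as inner
# instructions, so counting these avoids double counting.
INIT_ACCOUNT_TYPES = {"initializeAccount", "initializeAccount2", "initializeAccount3"}


def summarize_transaction(tx):
    """Summarize a getTransaction result fetched with encoding='jsonParsed'."""
    message = tx["transaction"]["message"]
    meta = tx["meta"]
    keys = message["accountKeys"]

    # Who signed it?
    signers = [k["pubkey"] for k in keys if k.get("signer")]

    # What programs executed? Include inner (CPI) instructions.
    instructions = list(message["instructions"])
    for inner in meta.get("innerInstructions") or []:
        instructions.extend(inner["instructions"])
    programs = sorted({ix["programId"] for ix in instructions})

    # How much SOL moved? Net lamport change per account (post minus pre).
    deltas = {
        k["pubkey"]: post - pre
        for k, pre, post in zip(keys, meta["preBalances"], meta["postBalances"])
        if post != pre
    }

    # Were any token accounts created? "parsed" can be a plain string for
    # some programs, so only inspect dict-shaped entries.
    created = sum(
        1
        for ix in instructions
        if isinstance(ix.get("parsed"), dict)
        and ix["parsed"].get("type") in INIT_ACCOUNT_TYPES
    )

    return {
        "signers": signers,
        "programs": programs,
        "fee_lamports": meta["fee"],
        "lamport_deltas": deltas,
        "token_accounts_created": created,
    }
```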

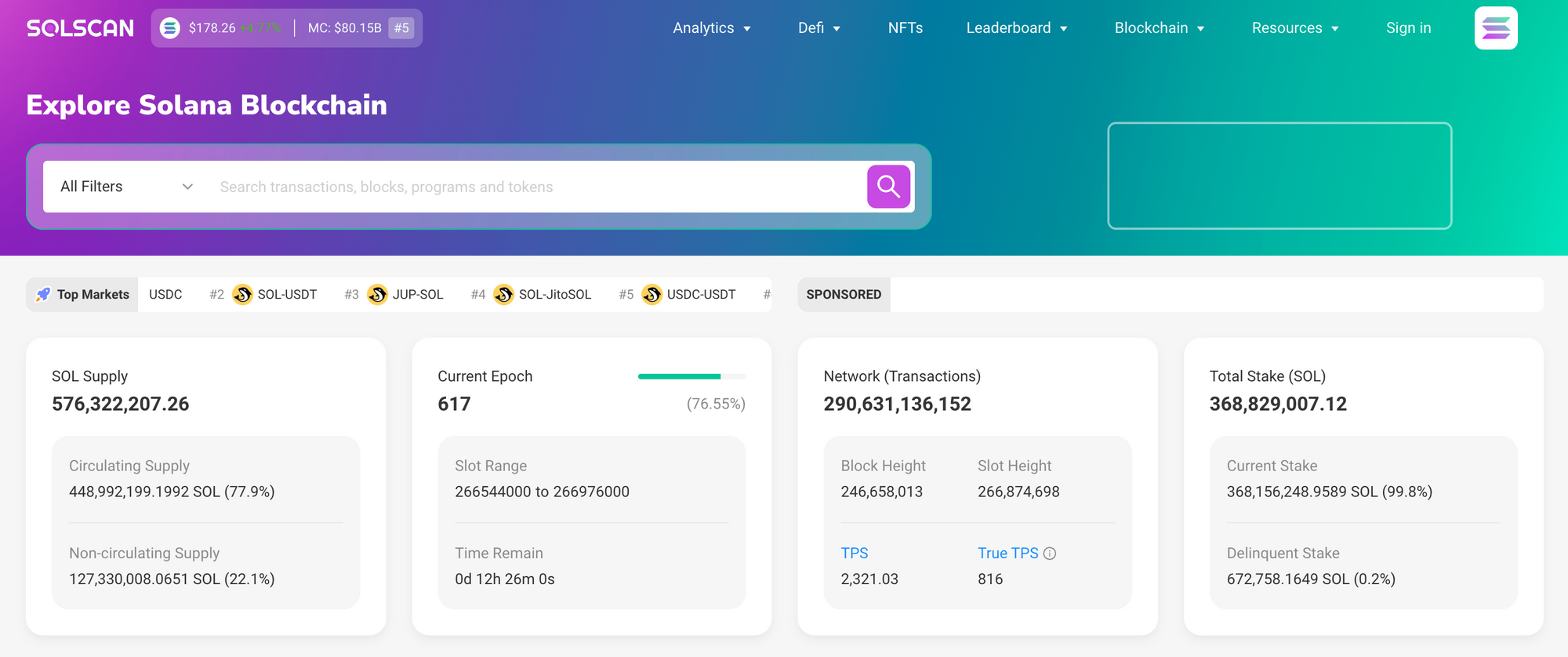

For everyday reconnaissance I lean on one tool often. If you want a clean, fast way to parse Solana transactions and program calls, check out the Solscan explorer. It shows program traces and token transfer breakdowns in a way that helps me spot anomalies quickly. It’s my go-to because the timeline and decoded instruction views save me time, and time is money, especially when you’re triaging a possible exploit.

Don’t just stop at a single transaction. Look for patterns across nearby blocks. Repeated CreateAccount calls or lots of tiny transfers clustered on a single address are red flags. They could mean dusting attacks, mixer behavior, or botnet churn. Conversely, a steady cadence of staking deposits across multiple wallets often means a coordinated staking campaign, maybe an institutional participant warming up.
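The "tiny transfers clustered on one address" check is easy to sketch once you have transfers flattened into `(source, destination, lamports)` tuples. The function name and both thresholds below are placeholders I picked for illustration; tune them to whatever you consider dust.

```python
from collections import defaultdict


def dust_targets(transfers, max_lamports=10_000, min_count=20):
    """Find destinations receiving many tiny transfers.

    transfers: iterable of (source, destination, lamports) tuples.
    Returns {destination: {"tiny_transfers": n, "distinct_senders": m}}
    for destinations with at least min_count transfers of max_lamports or less.
    """
    counts = defaultdict(int)
    senders = defaultdict(set)
    for src, dst, lamports in transfers:
        if lamports <= max_lamports:
            counts[dst] += 1
            senders[dst].add(src)
    return {
        dst: {"tiny_transfers": n, "distinct_senders": len(senders[dst])}
        for dst, n in counts.items()
        if n >= min_count
    }
```

The distinct sender count helps separate one bot hammering an address from many wallets converging on it, which are different stories.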

Also, pay attention to rent-exempt account creations. Those aren’t free. They indicate long-lived accounts and usually, longer engagement. Double-check token mints and metadata when NFTs are involved; metadata fields can contain URLs or signs of front-running mint scripts. I once missed that detail and lost an hour tracing fake metadata links. Live and learn.

When you run analytics, keep a lightweight pipeline. Collect basic fields: slot, block time, tx signature, signer keys, program IDs, token transfer records, lamports in/out, rent-exempt flags. Medium complexity queries let you group by program or signer. Longer analyses—say, clustering wallets by shared signers or SPL token patterns—require more compute but are often where you find real insights, like a bot cluster repeatedly sandwiching trades across pools.
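A lightweight record for those fields might look like the sketch below. The `TxRecord` class, its field names, and the two grouping helpers are all my own naming, not a schema from any indexer; the point is just that a flat record plus a couple of counters gets you the "group by program or signer" queries without much machinery.

```python
from collections import Counter
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TxRecord:
    slot: int
    block_time: Optional[int]  # may be missing for some blocks
    signature: str
    signers: list
    program_ids: list
    token_transfers: list = field(default_factory=list)
    lamports_delta: int = 0  # net lamports in/out for the account you track
    creates_rent_exempt_account: bool = False


def count_by_program(records):
    """Number of transactions touching each program (once per transaction)."""
    counts = Counter()
    for rec in records:
        counts.update(set(rec.program_ids))
    return counts


def count_by_signer(records):
    """Number of transactions each signer appears in."""
    counts = Counter()
    for rec in records:
        counts.update(set(rec.signers))
    return counts
```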

On the tooling front I use a few heuristics. Watch for compute-unit or fee spikes that look improbable for the program involved. Also look for unusual instruction sequences, like repetitive CPI chains calling the same program across different signers. That combination often signals automated strategies. Another tip: track token account creation rates for a mint. A sudden spike usually means an airdrop claim or a new mint phase. These signals aren’t absolute, but they narrow your hypotheses.
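For the creation-rate tip, a rough sketch is to bucket token account creations per mint by time. It assumes you’ve already extracted `(mint, block_time)` pairs from your records; the function name and the 60-second default bucket are arbitrary choices of mine.

```python
from collections import Counter


def creation_counts(events, bucket_seconds=60):
    """Count token account creations per (mint, time bucket).

    events: iterable of (mint_address, block_time_in_unix_seconds).
    Each key is (mint, bucket_start), where bucket_start is the block time
    rounded down to a multiple of bucket_seconds.
    """
    counts = Counter()
    for mint, block_time in events:
        bucket_start = block_time - (block_time % bucket_seconds)
        counts[(mint, bucket_start)] += 1
    return counts
```

Plot or eyeball the buckets for one mint and the spike usually stands out without any statistics.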

Now, a bit of honesty. I’m not 100% sure about every pattern I call. There’s ambiguity. On one hand, you might see a whale splitting transactions to stay under the radar; on the other hand, you might see a developer stress-testing a program. Initially I thought splitting always meant stealth, but now I know some legitimate services shard transactions for UX reasons. The point is: hypothesis first, then verify.

One common mistake is trusting token symbols at face value. Token renaming and copied metadata are common. Always validate the mint address. If you spot a mint with unexpected decimals (zero, say, on a supposedly fungible token) or odd supply fields, dig deeper. Also, cross-check on-chain announcements; a legit project will often announce a token migration or burn. If you don’t see that, consider reaching out via official channels (and verify those channels).
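In code, "validate the mint address" boils down to comparing against a list you maintain yourself rather than anything read from token metadata. A minimal sketch, assuming a hand-curated `TRUSTED_MINTS` table (the USDC mint below is the mainnet address as I know it; verify it from the issuer’s official docs before trusting my copy):

```python
# Hand-maintained allowlist: symbol -> mint address you have verified yourself.
TRUSTED_MINTS = {
    "USDC": "EPjFWdd5AufqSSqeM2qN1xzybapC8G4wEGGkZwyTDt1v",
}


def check_mint(symbol, mint_address):
    """Compare a claimed symbol against the allowlisted mint address.

    Returns "ok", "mismatch" (symbol is known but the mint differs, a likely
    lookalike), or "unknown-symbol" (nothing to compare against).
    """
    expected = TRUSTED_MINTS.get(symbol.upper())
    if expected is None:
        return "unknown-symbol"
    return "ok" if mint_address == expected else "mismatch"
```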

For scaling alerts, I prefer threshold and pattern-based triggers. For example: trigger when a single signer creates more than N token accounts in M minutes, or when a program sees a 3x surge in CPI calls relative to a rolling average. Those rules reduce false positives. However, adjust them—different programs have unique baseline behaviors, and thresholds need to be tuned to context.
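Both example rules fit in a few lines. This is a sketch under my own naming (`burst_alert`, `surge_alert`), with N, M, and the 3x factor passed in as parameters so you can tune them per program.

```python
from collections import deque


def burst_alert(timestamps, max_events, window_seconds):
    """True if more than max_events fall within any window_seconds span.

    timestamps: unix seconds of, e.g., token account creations by one signer.
    """
    window = deque()
    for ts in sorted(timestamps):
        window.append(ts)
        # Drop events that are older than the window relative to this one.
        while ts - window[0] > window_seconds:
            window.popleft()
        if len(window) > max_events:
            return True
    return False


def surge_alert(counts, lookback, factor=3.0):
    """True if the latest count is at least factor times the rolling average.

    counts: per-interval CPI call counts, oldest first. The baseline is the
    mean of the `lookback` intervals immediately before the latest one.
    """
    if len(counts) <= lookback:
        return False  # not enough history to form a baseline
    baseline = sum(counts[-lookback - 1:-1]) / lookback
    return baseline > 0 and counts[-1] >= factor * baseline
```

For instance, `burst_alert(times, max_events=50, window_seconds=600)` is the "more than 50 token accounts in 10 minutes" rule for a single signer.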

Here’s a thing I fuss over: timestamp reconciliation. Solana’s high throughput sometimes creates apparent race conditions when mapping off-chain events to on-chain confirmations. If you’re reconciling logs with user actions, allow leeway. That lag can be maddening, but it matters less for long-lived patterns and more for immediate incident response.
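One way to build in that leeway is to match each off-chain event to the nearest on-chain block time within a tolerance, and leave it unmatched otherwise. The function name and the 30-second default below are my assumptions; pick a tolerance that fits what you observe between your logs and confirmations.

```python
def match_events(offchain, onchain, tolerance_seconds=30):
    """Pair off-chain events with the nearest on-chain transaction in time.

    offchain: {event_id: unix_seconds} from your own logs.
    onchain:  {signature: block_time_unix_seconds}.
    Returns {event_id: signature or None}; None means nothing landed within
    tolerance_seconds of the event.
    """
    matches = {}
    for event_id, event_ts in offchain.items():
        best = min(
            onchain.items(),
            key=lambda item: abs(item[1] - event_ts),
            default=None,
        )
        if best is not None and abs(best[1] - event_ts) <= tolerance_seconds:
            matches[event_id] = best[0]
        else:
            matches[event_id] = None
    return matches
```

Note this is nearest-match only; two events can map to the same signature, which is itself worth flagging during an incident.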

Also—don’t ignore the social layer. Community chatter often flags strange behavior before explorers surface neat summaries. That said, social noise is abundant. Be skeptical. My rule: social tip-offs inform what to look at on-chain, they don’t replace on-chain verification. This balance keeps you grounded and prevents wild conjectures.

I’ve learned to document investigations succinctly. Start with a hypothesis sentence, then list the key on-chain facts, then state the conclusion and confidence level. Short notes save other folks (and future-you) a lot of time. Bonus: it’s a structure you can hand off to non-technical teammates without drowning them in raw JSON.

FAQ

How do I spot bot activity in SOL transactions?

Look for repetitive patterns: many txs with identical instruction footprints, rapid sequential slot entries, and clusters of tiny transfers. Also watch for the same signer or fee payer recurring across what look like separate wallets. Those signs together usually indicate automation rather than organic user behavior.
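A rough sketch of the "identical instruction footprint" check: reduce each transaction to the ordered sequence of (program ID, instruction type) pairs and group transactions that share it. It assumes `jsonParsed`-style instruction dicts; the footprint definition and the `min_group` default are my choices, and a large group is a prompt to look closer, not proof of a bot.

```python
from collections import defaultdict


def footprint(instructions):
    """Ordered tuple of (programId, parsed type or 'raw') for one transaction."""
    shape = []
    for ix in instructions:
        parsed = ix.get("parsed")
        ix_type = parsed.get("type", "raw") if isinstance(parsed, dict) else "raw"
        shape.append((ix["programId"], ix_type))
    return tuple(shape)


def group_by_footprint(txs, min_group=3):
    """Group signatures sharing a footprint.

    txs: {signature: [instruction dicts]}.
    Returns {footprint: [signatures]} for groups of at least min_group.
    """
    groups = defaultdict(list)
    for signature, instructions in txs.items():
        groups[footprint(instructions)].append(signature)
    return {fp: sigs for fp, sigs in groups.items() if len(sigs) >= min_group}
```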

What’s the easiest check when something looks suspicious?

Validate the program IDs and mint addresses. Then check the instruction decode to see what the program actually did. If the decoded instruction or program doesn’t match the project’s public docs, that’s a red flag. Oh, and confirm whether the activity coincides with a program upgrade—sometimes it’s benign.

Which explorer do you recommend for quick triage?

I rely on fast, readable explorers for initial triage; one of my favorites is the one mentioned above, because it surfaces decoded instructions and token transfer breakdowns quickly. For deeper dives, pair explorer views with RPC traces or a local indexer so you can run historical queries and clustering.

So yeah—reading Solana is part art, part forensics. You need curiosity and discipline. Something about the chain’s pace rewards pattern recognition, but it punishes assumptions. I’m biased toward tools that let me slice data quickly. Sometimes a tiny visual cue tells me more than a spreadsheet. Other times you have to slog through raw logs. Either way, stay skeptical, stay nimble, and keep your alert thresholds tuned. And hey—if you’re getting started, bookmark a reliable explorer and practice on a few old incidents; it’s the best learning curve I know.